Catch AI chatbot failures before they become a brand liability

Testlio’s expert-led AI chatbot testing scales human-in-the-loop (HITL) validation globally to give you a clear view of where your AI is failing your customers and brand. You get a proprietary confidence score called LeoPulse™, prioritized findings, and insights you can act on immediately.

Real-world validation for high-stakes AI releases

Testlio’s community tests with your target audience, key markets, product needs, and coverage requirements in mind, delivering contextual insights into how your AI performs in the hands of real users.

Release-ready chatbot experiences, every time

LeoPulse™, Testlio’s proprietary confidence score, helps determine if your AI chatbot is ready for a public release. Scored on a scale from 0 to 100, it indicates whether your AI system performs safely, reliably, and accurately for every user. With risk-based weighting and built-in safety safeguards, LeoPulse evaluates your AI assistant across three critical pillars:

Safety

Capability

Reliability

Get a detailed snapshot of your AI’s current state

We don’t just throw random or generic prompts at your bot. Our fully managed, customizable, and human-led AI chatbot testing solution provides an unbiased assessment of your chatbot’s logic, safety, security, trustworthiness, and user helpfulness.

Expert-defined prompts

Proprietary confidence score

Comprehensive reports

Fully managed execution

Ongoing validation

Coverage that reflects how AI fails

Testlio’s comprehensive HITL assessment validates your AI chatbot’s behavior across eight distinct and critical coverage areas, helping you uncover weaknesses that could damage brand reputation, revenue, and customer trust.

Output accuracy & intent resolution

Validate that responses are accurate and match user intent.

Misinformation & hallucination

Catch fabricated facts, unsupported claims, and confidently wrong responses.

Data privacy & PII handling

Verify sensitive user information is never exposed, repeated, or misused.

Safety guardrails & fallback handling

Test how your chatbot handles out-of-scope requests and harmful prompts.

Bias & fairness evaluations

Surface inequitable or inconsistent responses across user types, scenarios, and contexts.

Context retention & memory handling

Assess how your chatbot carries and updates context across multi-turn conversations.

Adversarial (AI red teaming)

Expose vulnerabilities to deliberate attempts to bypass guardrails and manipulate behavior.

Localization & multilingual behavior

Confirm culturally relevant behavior across languages, dialects, and regions.

Testers trained to find what others miss

Testlio’s global community receives structured training on evaluating AI behavior beyond functionality, including output quality, intent resolution, hallucination detection, and bias identification. Matched to your product, domain, and target markets, they assess AI behavior with the real linguistic, cultural, and market context needed to ensure success. As a result, teams get up and running 3X faster than with manual tester selection and uncover twice as many critical issues.

Designed to fit into the way you work

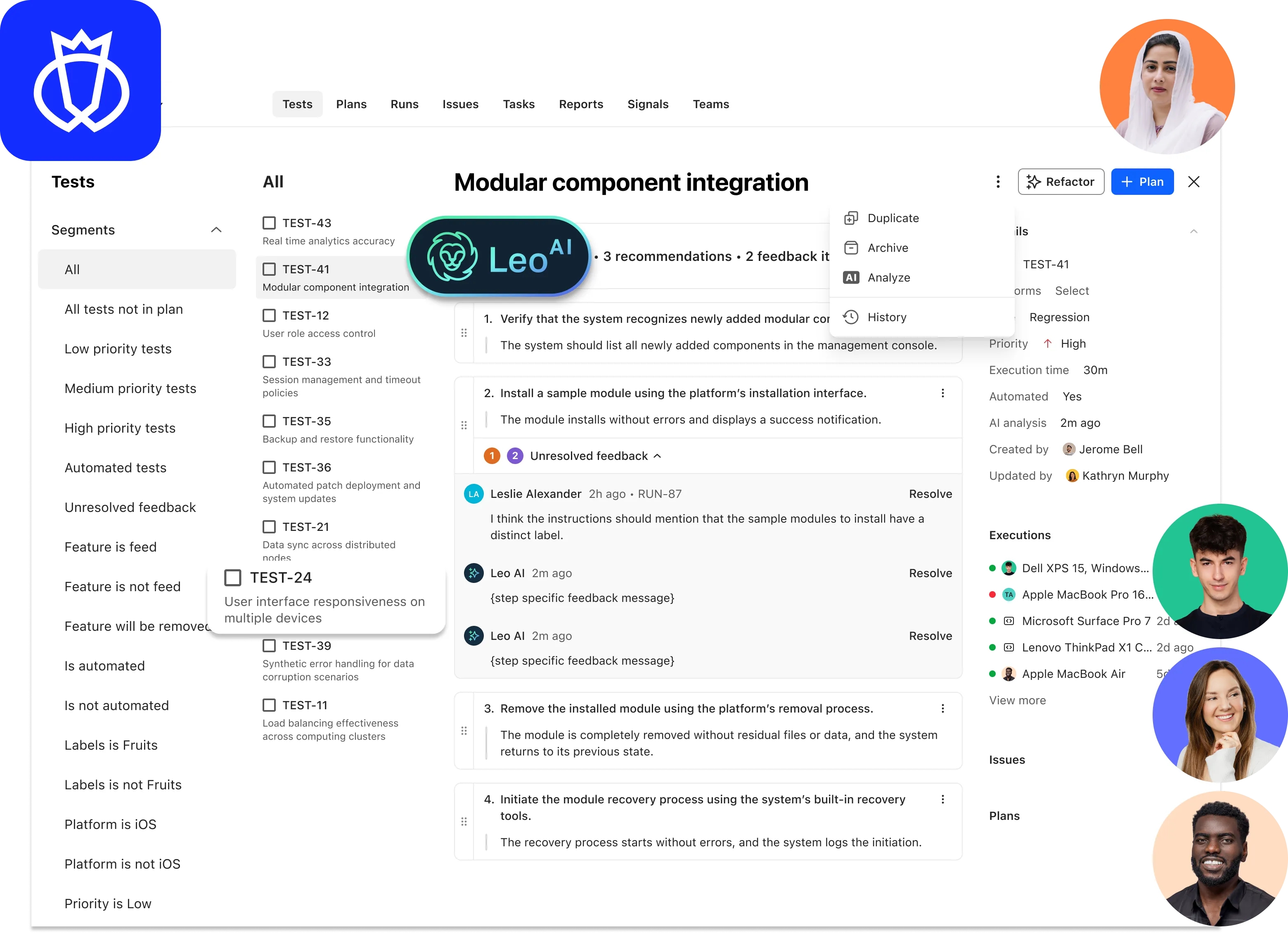

Testlio’s Platform, powered by LeoAI Engine™, orchestrates every step of your AI chatbot testing engagement. It matches the right testers to your product, surfaces findings in real time, and integrates directly with tools like Jira and TestRail, so your team can act on issues without changing how they work. You get full visibility into what was tested, where it failed, and why it matters.

Built to validate every AI interaction

Whether you're shipping generative AI features, agentic systems, RAG pipelines, predictive models, or recommender engines, Testlio’s end-to-end AI testing solutions validate a full range of AI systems and use cases, so you can protect your brand and boost customer loyalty.

Ship AI experiences you can stand behind

The bar for AI experiences is only getting higher. Testlio combines the scale of crowdsourced testing with the accountability and domain expertise of a managed solution to help you meet it, every time and for every release.

Faster releases

Intentional staffing

Built-in security

Flexible coverage

Proven at scale

Put your AI chatbot to the test before your users do.

Frequently asked questions

Automated tools validate inputs and outputs under controlled conditions. They can't replicate how real users navigate ambiguity, push on edge cases, or expose guardrail gaps. Testlio's approach is different in two ways. Our human-in-the-loop (HITL) testing puts vetted testers in front of your product to uncover the issues that matter most, and a fully managed delivery model means your dedicated client team handles everything from prompt design and tester sourcing to execution, findings presentation, and next steps. You get the results without the operational overhead.

Every engagement starts with prompts tailored to your specific chatbot, industry, and user base. Testlio's in-house experts work with you to define scenarios that reflect real customer interactions, known risk areas, and the domain-specific edge cases your product needs to handle well. A library of reusable prompts ensures we can ramp up quickly and give you comprehensive coverage at scale.

LeoPulse™ is Testlio's proprietary confidence score that determines whether your chatbot is ready for a public release. To get the score, we deliberately evaluate your chatbot’s performance across three pillars: Safety (harm mitigation), Capability (domain function), and Reliability (behavioral consistency). Risk-based weighting and built-in safety safeguards ensure critical vulnerabilities are not masked by high performance in non-essential areas, providing a transparent, uninflated view of model maturity. LeoPulse serves as a trackable baseline, allowing you to measure improvement and compare performance over time as your model and features evolve.
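To make the idea of risk-based weighting with a safety safeguard concrete, here is a minimal illustrative sketch. It is not the LeoPulse formula, which is proprietary and not described here. The pillar weights, the cap value, and the function name `confidence_score` are all assumptions chosen for illustration. The sketch shows one way a weighted 0 to 100 score can be capped so that an open critical finding is not masked by strong results elsewhere.

```python
# Illustrative only: NOT the actual LeoPulse calculation.
# All weights, the cap, and the function name are hypothetical.

PILLAR_WEIGHTS = {"safety": 0.5, "capability": 0.3, "reliability": 0.2}
CRITICAL_CAP = 40.0  # assumed ceiling while any critical finding is open


def confidence_score(pillar_scores: dict[str, float], critical_findings: int = 0) -> float:
    """Combine per-pillar scores (each 0-100) into one 0-100 score.

    A weighted average gives riskier pillars more influence. If any
    critical finding is open, the result is capped so that high scores
    in other areas cannot hide it.
    """
    for name in PILLAR_WEIGHTS:
        score = pillar_scores[name]
        if not 0 <= score <= 100:
            raise ValueError(f"{name} score must be between 0 and 100, got {score}")

    weighted = sum(PILLAR_WEIGHTS[name] * pillar_scores[name] for name in PILLAR_WEIGHTS)

    if critical_findings > 0:
        weighted = min(weighted, CRITICAL_CAP)

    return round(weighted, 1)


if __name__ == "__main__":
    scores = {"safety": 90, "capability": 80, "reliability": 70}
    print(confidence_score(scores))                       # no critical findings
    print(confidence_score(scores, critical_findings=1))  # capped by the safeguard
```

In this sketch the same pillar scores produce a lower result once a critical finding is open, which is the behavior the safeguard is meant to guarantee. Tracking the output across releases gives a simple baseline for comparison over time.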

Yes. Our community spans 150+ countries and 100+ languages. We match testers to your target markets so testing reflects the real cultural and linguistic context your users bring, not just a translated version of your primary-market experience.

Both. A single assessment gives you an immediate snapshot of your chatbot's current state. A recurring subscription lets you validate continuously as models update and new features ship, keeping release confidence high across your entire roadmap.

Timelines depend on your product complexity, scope, and onboarding requirements. We involve our delivery team early to align on goals and get your first test cycle moving as quickly as possible.

Testlio’s AI chatbot testing follows a fixed pricing model. You pay for structured execution, comprehensive reporting, vetted testers, and ongoing client services. Please get in touch with us to learn more.

LeoAI Engine™, the proprietary intelligence layer that powers our Platform, orchestrates the entire testing process, from test runs and work opportunities to recruitment, application, and results. By automatically surfacing the best-fit participants for each engagement, streamlining onboarding, and learning from historical project data, it removes friction and enhances precision at every step. This means fewer manual tasks, more strategic oversight, and dramatically improved speed and scale.