De-risk every AI release before it goes live

Reduce hallucinations, biases, and vulnerabilities in your AI-powered apps and features. Testlio’s managed crowdsourced model embeds vetted experts into your AI testing workflows so you can deliver safe, reliable experiences for every user, in every market.

Test global releases with real-world complexities

Your AI’s performance defines your product experience

When AI gets it wrong, it can mislead users, show bias, or create unsafe outputs that harm your brand. Testlio’s crowdsourced AI testing adds the scale, expertise, and real-world diversity your internal teams need to uncover hidden issues before release.

Protect velocity

Detect and fix critical AI issues at scale without slowing releases or burning out your team.

Mitigate risk

Validate your system for hallucinations, bias, outdated or toxic outputs, and model drift over time.

Stay compliant

Meet global regulations, privacy standards, and ethical guidelines in key target markets.

Launch confidently

Ensure consistent and reliable performance across languages, cultures, and user contexts.

Test at scale

Simulate adversarial prompts, misuse scenarios, and multi-agent interactions in real conditions.

Everything your AI needs to perform everywhere

We don’t just check if your AI works. We validate how it behaves. From core functionality to those rare edge cases that only appear in the real world, Testlio brings a human-in-the-loop approach to AI testing to ensure your product wins in every market.

Our AI testing services include:

Red teaming

Bias testing

Stability testing

Context retention

Generative AI testing

RAG testing

AI agents & MCP server testing

Predictive model testing

Recommender testing

Experts who know how AI fails

Our testers understand prompt manipulation, multi-agent coordination, and the unpredictable ways AI can behave. They replicate real-world conditions, challenge assumptions, and push your models to their limits so you can launch with confidence across markets.

From AI to every customer touchpoint

Validate the broader product experience across devices, regions, platforms, and languages. Our testers cover accessibility, localization, regression, usability, and more to ensure your product works everywhere your customers need it to.

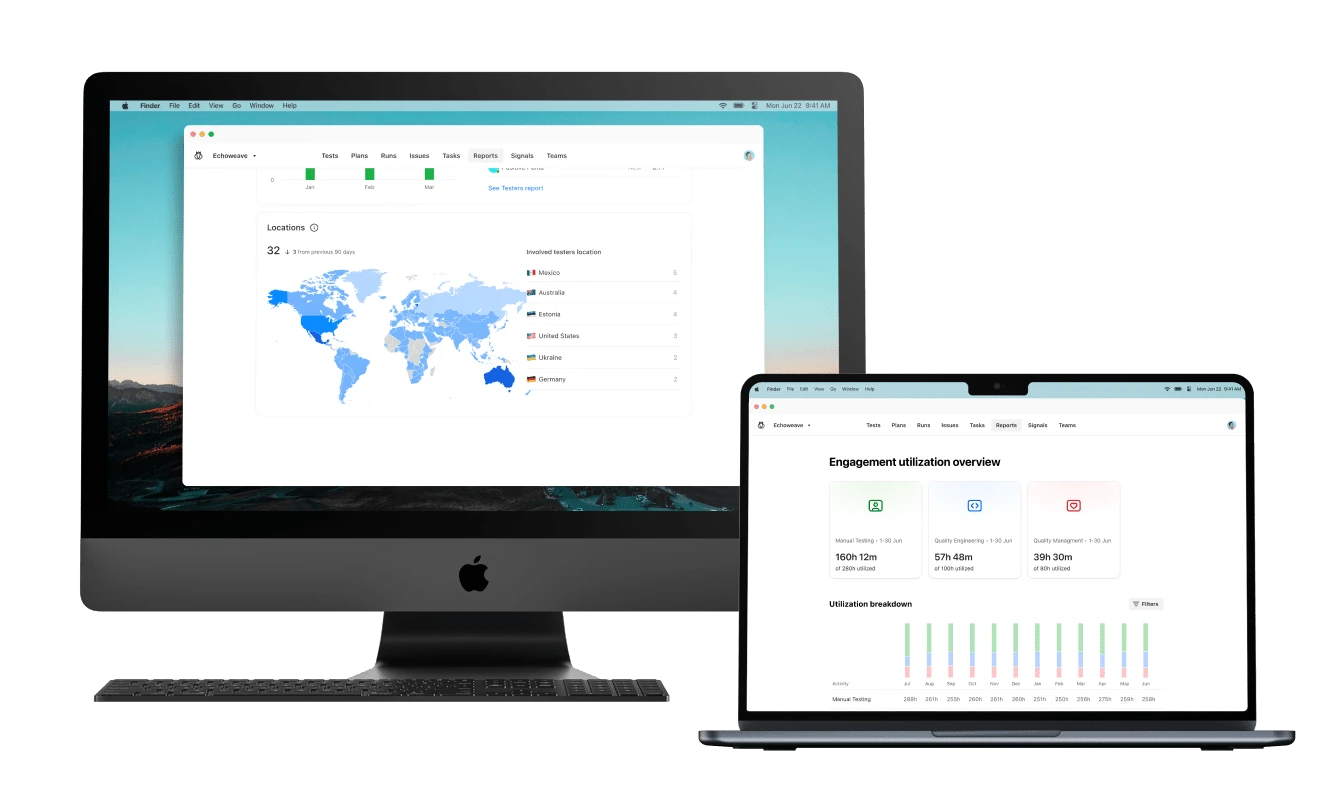

A platform designed for quality at scale

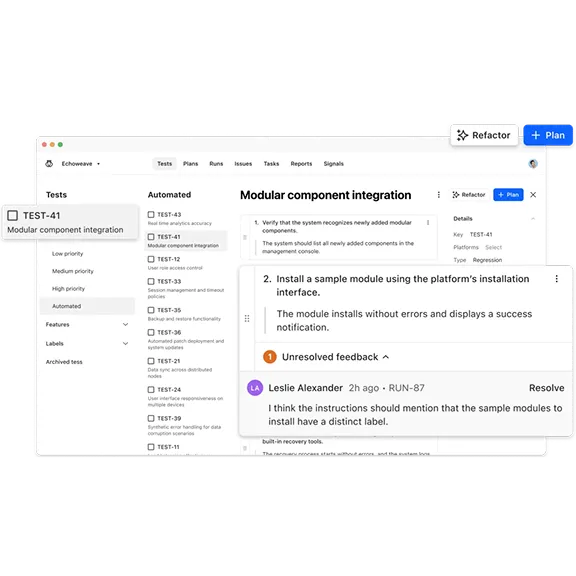

Powered by LeoAI Engine™, Testlio’s Platform brings intelligence to every stage of AI QA. It automatically matches the right testers, orchestrates the entire testing workflow, and surfaces insights in real time. You see exactly who tested what, where issues occurred, and why. With built-in integrations for tools like Jira and TestRail, your teams move faster while staying fully in control.

Real-world AI validation without trade-offs

Get the scale of crowdsourced testing with the control needed for high-stakes AI releases. Testlio embeds domain specialists in real-world settings to uncover weaknesses, validate fixes, and ensure your models work everywhere you launch.

End-to-end pipeline integration

Human-in-the-Loop (HITL) testing

Documentation-driven execution

Unmatched domain expertise

Scale testing as your roadmap evolves

Security and compliance first

Case studies and resources

Ready to ship with speed and absolute confidence?

Frequently asked questions

We specialize in managed crowdsourced AI testing. Vetted AI domain experts are embedded into your QA workflows to test in real-world conditions, at scale. We manage everything from scoping to execution to reporting, so your team can reduce risk, maintain release velocity, and confidently ship AI features to every market you serve.

Pricing is based on test type, complexity, and service level. There are no per-seat licenses. You pay for structured execution, vetted testers, platform access, and ongoing client services.

We test LLMs, multimodal models, recommender engines, predictive systems, RAG pipelines, and agentic AI. Our coverage includes bias detection, adversarial prompt testing, hallucination detection, model drift monitoring, and cultural fit validation.

It depends on product complexity, testing type, and scope. We involve our delivery teams early to define goals, align on architecture and risk, onboard testers, and then begin initial cycles as soon as scoping and onboarding are complete.

Yes, we are ISO/IEC 27001:2022 certified and operate under strict security protocols for regulated industries. Our testers work within secure environments, validating AI models against the EU AI Act, GDPR, and other global regulations. For more information, visit our Trust Center.

We only work with trained and highly vetted QA professionals. For AI engagements, we match testers based on domain expertise, skills and certifications, market familiarity, and language skills to replicate your real user base.

Yes. Our platform integrates with Jira, TestRail, Slack, and other tools you use for AI development and QA. You can manage cycles, track issues, and collaborate without disrupting your workflows.

LeoAI Engine™, the proprietary intelligence layer that powers our platform, orchestrates the entire testing process, from test runs and work opportunities to recruitment, application, and results. By automatically surfacing the best-fit testers for each engagement, streamlining onboarding, and learning from historical project data, it removes friction and enhances precision at every step. For AI QA leads, this means fewer manual tasks, more strategic oversight, and dramatically improved speed and scale.